AI tools can feel almost magical the first time you use them. You type a question, press enter, and within seconds you get a full answer, an article draft, or a list of ideas.

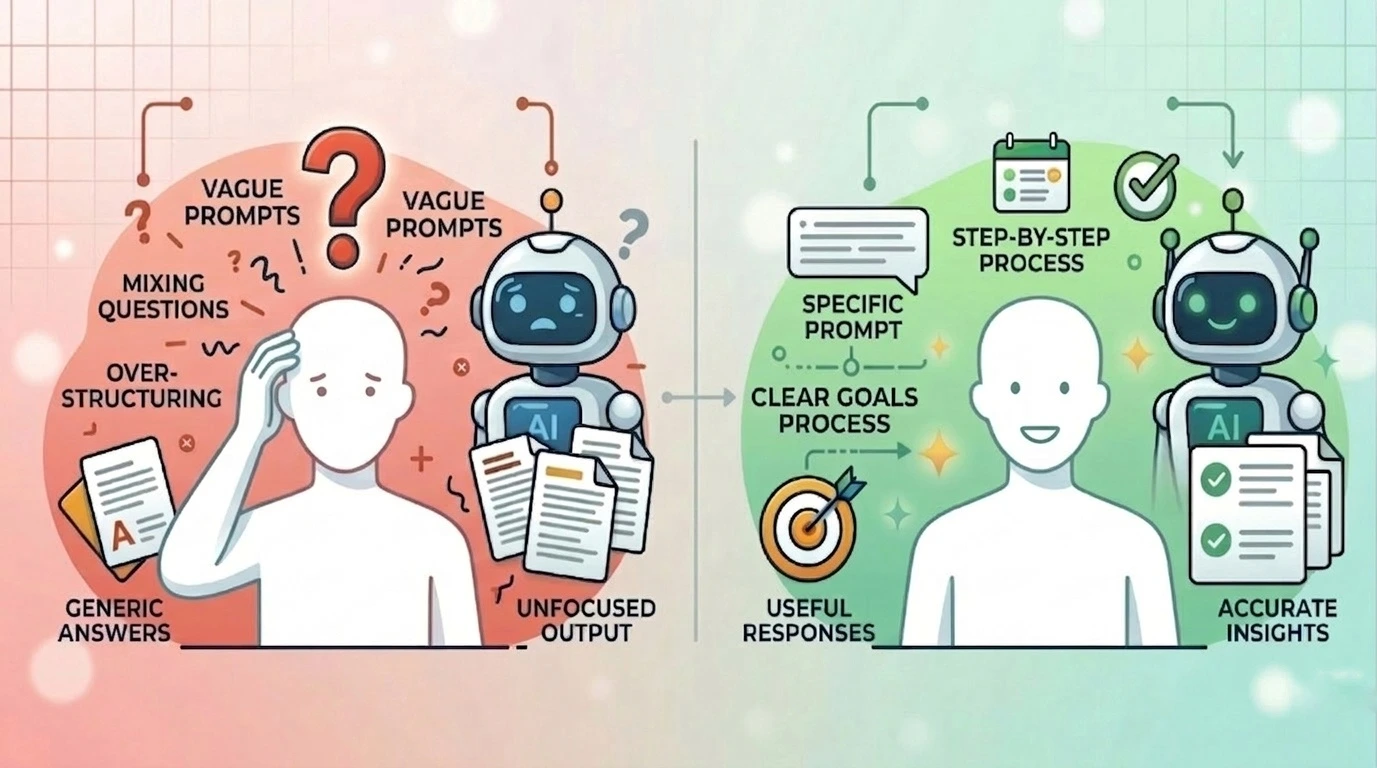

But after the initial excitement, many beginners notice something frustrating. Sometimes the responses feel generic, confusing, or just not what they expected. It’s easy to assume the problem is the AI itself. In reality, the issue is usually how the AI is being used.

Most people start using AI without learning a few simple habits that make a huge difference. Small mistakes — like vague prompts, unclear instructions, or trying to ask too much at once — can dramatically affect the quality of the results.

Once you understand the common mistakes beginners make, you can quickly adjust how you interact with AI and start getting responses that are far more useful, relevant, and practical.

1. Using Vague Prompts

One of the most common beginner mistakes with AI is asking vague questions and expecting precise answers.

Many people type something like:

“Write something about productivity.”

“Give me marketing ideas.”

“Explain AI.”

Technically, the AI can answer these prompts. But the result is usually generic, shallow, and forgettable.

Think of it this way: giving AI a vague prompt is like walking into a library and saying, “Give me a book.” The librarian can hand you any book, but it probably won’t be the one you actually need.

AI works best when it has clear direction. Instead of writing a broad prompt, add a little context and specificity. Mention the topic, audience, format, or goal.

For example:

Vague prompt: “Give me productivity tips.”

Better prompt: “Give me 5 practical productivity tips for remote workers who struggle with phone distractions.”

The second prompt tells the AI who the advice is for and what problem it should focus on, which leads to a much more useful answer.

If the prompt is vague, the output will be vague. If the prompt is clear, the response becomes far more helpful and relevant.
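If you send prompts from a script rather than a chat window, the same habit can be sketched in Python. This is only an illustration: `build_prompt` and its parameters are hypothetical names made up for this example, not part of any AI tool. It shows how adding an audience, a problem, and a count turns the vague prompt above into the better one.

```python
def build_prompt(task, audience=None, problem=None, count=None):
    """Assemble a prompt from optional context (hypothetical helper for illustration)."""
    # Start with the bare request, adding a count if one was given.
    prompt = f"Give me {count} {task}" if count else f"Give me {task}"
    # Say who the answer is for.
    if audience:
        prompt += f" for {audience}"
    # Say which problem the answer should focus on.
    if problem:
        prompt += f" who struggle with {problem}"
    return prompt + "."


vague = build_prompt("productivity tips")
better = build_prompt(
    "practical productivity tips",
    audience="remote workers",
    problem="phone distractions",
    count=5,
)

print(vague)
print(better)
```

The first call leaves every piece of context empty, so the AI would have to fill the gaps on its own. The second call supplies them explicitly.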

2. Mixing Multiple Questions in One Prompt

Cramming four requests into one prompt (SEO explanation, keywords, blog intro, and title) forces the AI to divide its attention. The result? A messy, shallow output.

It often looks something like this:

“Explain what SEO is, give me 10 keyword ideas, write a blog intro, and also suggest a title.”

Technically, AI can attempt to answer everything. But the result is usually messy, rushed, or uneven. One part may be decent, another part shallow, and something might get ignored completely.

Why does this happen?

Because AI performs best when it can focus on one clear task at a time. When a prompt contains several different requests, the model has to divide its attention between them. The output becomes less structured and less useful.

It is like asking a coworker:

“Can you research competitors, design a logo, write an article, and plan our marketing campaign?”

Even a talented person would struggle to deliver high-quality work for all of that at once.

A better approach is to break the task into steps.

For example:

Step 1: “Explain what SEO is in simple terms for beginners.”

Step 2: “Give me 10 beginner-friendly SEO blog topic ideas.”

Step 3: “Write a blog introduction for the topic ‘SEO for Small Business Owners.’”

This step-by-step approach helps AI produce more focused, higher-quality responses.

The key idea is simple: One clear prompt leads to one clear answer.
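For readers who automate their prompts, the step-by-step approach might look like the Python sketch below. It assumes a function named `ask_ai`, which is a hypothetical stand-in defined here only so the example runs; you would replace its body with a call to whatever AI tool you actually use.

```python
def ask_ai(prompt):
    """Hypothetical stand-in for a real AI call; it only echoes the prompt back."""
    return f"[response to: {prompt}]"


# One clear task per prompt, instead of one overloaded request.
steps = [
    "Explain what SEO is in simple terms for beginners.",
    "Give me 10 beginner-friendly SEO blog topic ideas.",
    "Write a blog introduction for the topic 'SEO for Small Business Owners.'",
]

# Send each step separately and keep the answers in order.
responses = [ask_ai(step) for step in steps]

for step, response in zip(steps, responses):
    print(step)
    print(response)
```

Keeping each request separate also makes it easy to review one answer before moving on to the next step.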

3. Over-Structuring Prompts Without Thought

You don’t need to tell the AI to “act as a world-class expert with 20 years of experience” for a simple email draft. Too many constraints make the output feel stiff and robotic.

You’ll often see prompts like this:

“Act as a world-class expert with 20 years of experience. Use a persuasive tone. Write in 7 sections. Each section must contain 3 bullet points. Add examples, statistics, a conclusion, and a call to action.”

In theory, structure helps. In practice, too much structure can box the AI in.

When every detail is tightly controlled, the response can feel stiff, repetitive, or forced. Instead of focusing on the main idea, the AI spends most of its effort just trying to satisfy the format rules.

It’s a bit like giving someone a creative task but also handing them a long checklist of restrictions. The result might technically follow the instructions, but it won’t always feel natural.

Often, a simpler prompt works better.

For example:

Over-structured prompt

“Write an article about morning routines with exactly 6 sections (Introduction, Benefits, Scientific Evidence with 3 statistics, a 5-step bullet-point routine, 4 common mistakes with explanations, and a 100-word conclusion). Keep paragraphs under 80 words and include bullet points where required.”

Simpler prompt

“Write a helpful article about why morning routines improve productivity. Include a few practical examples.”

The second prompt gives the AI direction without unnecessary constraints.

Structure can absolutely be useful — especially when you need specific formats like outlines, lists, or summaries. But adding structure just because you saw it in a prompt template often does more harm than good.

The better approach is simple:

Use structure when it serves the goal, not when it complicates it.

4. Treating AI Like a Mind Reader

AI understands patterns, not you. It doesn’t know your skills, your budget, or your brand voice unless you tell it.

Because AI often writes in a confident and conversational tone, it can feel like the response is uniquely designed for your situation. In reality, the AI is generating answers based on patterns from large amounts of data, not a deep understanding of you or your specific circumstances.

For example, someone might ask: “What career should I choose?”

The AI might produce a thoughtful, well-written answer. It may even sound insightful. But it still doesn’t know important things like your skills, interests, financial situation, personality, or long-term goals.

If someone treats that answer as personalized advice, they may give it more weight than it deserves.

The same thing happens with business advice, health tips, financial suggestions, and productivity systems.

The responses can be helpful starting points, but they are not truly personal recommendations.

A better approach is to treat AI outputs as general guidance, not final decisions. Use them to explore ideas, gather options, or see different perspectives — then apply your own judgment and real-world context.

In short: AI can generate useful insights, but it doesn’t actually know you.

5. Misunderstanding AI Creativity

Another mistake beginners make is expecting AI to constantly produce completely original ideas.

Because AI can generate text quickly and confidently, it can feel like an endless source of creativity. But in reality, AI is not inventing ideas in the same way humans do. It works by recognizing patterns in existing information and recombining them in new ways.

That means the output is often a remix of common ideas, not something entirely new.

For example, someone might ask: “Give me a completely unique business idea that no one has ever thought of.”

The AI might suggest something interesting, but if you look closely, the idea is usually a variation of concepts that already exist.

The same thing happens with blog topics, startup ideas, marketing strategies, and product concepts.

AI can help generate lots of possibilities quickly, which is incredibly useful for brainstorming. But expecting it to produce groundbreaking originality on demand often leads to disappointment.

The better way to use AI is as a creative partner, not a replacement for human creativity. Let it help you generate rough ideas, angles, or starting points — then refine and develop them with your own thinking.

In other words: AI is great at sparking ideas, but true originality still comes from humans.

6. Assuming AI Will “Figure It Out”

If you tell an AI to “make this better,” it has to guess what you mean. Do you want it shorter? More professional? Funnier?

Humans are surprisingly good at interpreting vague directions. If you tell a coworker, “Make this sound better,” they can usually guess what you mean by looking at the context.

AI doesn’t work the same way.

When instructions are ambiguous, the model has to interpret the request on its own, which can lead to results that don’t match what you had in mind.

For example, someone might write: “Improve this paragraph.”

But what does improvement actually mean?

- Make it shorter?

- Make it more persuasive?

- Simplify the language?

- Fix grammar?

- Change the tone?

Since the instruction is open to interpretation, the AI might change things you didn’t want changed or ignore the improvement you were actually hoping for.

Now compare that with a clearer instruction:

“Rewrite this paragraph to make it shorter and easier to read while keeping the main idea the same.”

This removes the ambiguity and gives the AI a clear goal to work toward.

A good rule to remember is simple:

If an instruction could mean several different things, AI has to guess.

The clearer you are about what kind of change you want, the more closely the output will match your expectations.

7. Asking Questions Without a Clear Goal

Information is rarely useful without an objective. Asking “Tell me about digital marketing” tends to produce a textbook definition.

Because AI makes it so easy to ask anything, people often type broad questions just to see what comes back. The problem is that when the goal is unclear, the response can be informative but not particularly useful.

For example, someone might ask: “Tell me about digital marketing.”

That question can lead in dozens of directions. The AI might explain what digital marketing is, list a few channels, mention some tools, or briefly cover several strategies. The answer may be correct, but it often ends up too general to help with a real task.

Now compare that with a question that has a clear goal:

“Explain three beginner-friendly digital marketing strategies a small online store can use to get its first customers.”

Here, the AI understands what you’re trying to achieve, not just the topic you’re curious about.

This simple shift makes a big difference. Instead of getting a broad overview, you receive practical information that moves you closer to a specific outcome.

Before writing a prompt, it helps to quickly ask yourself one question:

What do I want to do with the answer?

When the goal is clear, the AI can generate responses that are far more relevant and actionable.

Closing Thoughts

AI is powerful, but it isn’t magic.

The quality of the output almost always reflects the quality of the input. Vague prompts, unclear goals, and overloaded requests lead to weak results — while clear instructions and focused questions produce far better responses.

The difference between frustrating AI results and genuinely useful ones often comes down to a few small habits: being specific, asking one thing at a time, and giving the AI enough context to work with.

Once you understand this, everything changes. Instead of hoping the AI will guess what you want, you learn how to guide it toward better answers.

And that’s the real shift beginners make. Not just using AI, but learning how to work with it.